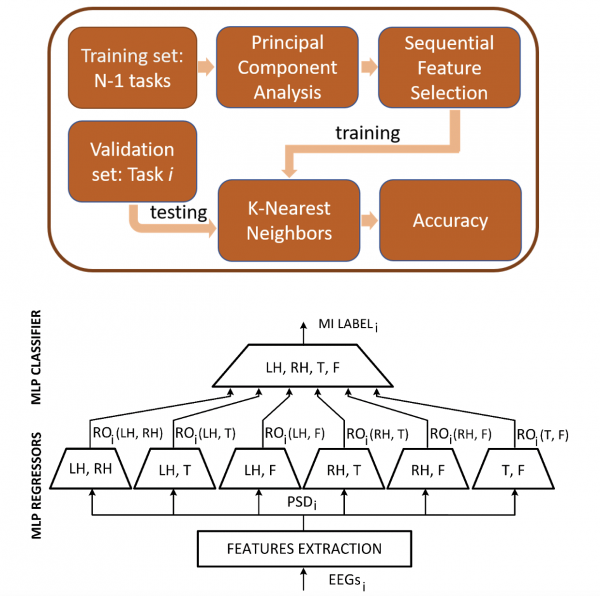

Explainable AI (XAI) is a novel branch of artificial intelligence (AI) in which the need for excellent performance is paired with the pursuit of comprehensible explanations of the AI's own behavior. XAI contrasts with the common view of machine learning models as "black boxes", where even the designers who engineered a model often cannot explain why it arrived at a specific decision. XAI algorithms are generally expected to follow three principles: transparency, interpretability, and explainability. "Transparency" refers to the ability to justify and describe how the model parameters are derived; "interpretability" is the possibility for a human to understand the decision-making process that the AI model performs; and "explainability" is the capacity to identify the interpretable features that led the AI model to produce a specific decision for a given example.

For example, an AI model that accurately distinguishes a malignant neoplasm from a benign one would be invaluable to an oncologist, but the model would be useless if it did not allow the oncologist to see where the neoplasm is and why it should be classified as malignant. In this context, we are currently focused on:

- Development of novel, dedicated XAI models for physiological signals and images

- Development of methodologies that make currently available, already marketed AI models explainable
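
As a minimal sketch of what explaining an existing black-box model can look like, the example below applies occlusion-based attribution to a one-dimensional signal: each window of the signal is masked in turn, and the resulting change in the model output is taken as that window's relevance. This is one generic, model-agnostic technique chosen for illustration, not the specific methodology developed here. The names `occlusion_attribution` and `toy_black_box`, the window size, and the zero baseline are all assumptions.

```python
from typing import Callable, List, Sequence


def occlusion_attribution(
    predict: Callable[[Sequence[float]], float],
    signal: Sequence[float],
    window: int = 5,
    baseline: float = 0.0,
) -> List[float]:
    """Score each window by how much the model output drops when it is masked."""
    reference = predict(signal)
    scores = []
    for start in range(0, len(signal), window):
        stop = min(start + window, len(signal))
        occluded = list(signal)
        # Replace the current window with the baseline value.
        for i in range(start, stop):
            occluded[i] = baseline
        scores.append(reference - predict(occluded))
    return scores


def toy_black_box(signal: Sequence[float]) -> float:
    # Hypothetical stand-in for an opaque model: it only responds to samples 10..14.
    return sum(signal[10:15]) / 5.0


# Synthetic signal with a burst of activity in samples 10..14.
signal = [0.1] * 10 + [1.0] * 5 + [0.1] * 5
scores = occlusion_attribution(toy_black_box, signal, window=5)
most_relevant = max(range(len(scores)), key=lambda k: scores[k])
print(scores, most_relevant)
```

Because the procedure only queries the model through `predict`, it needs no access to the model's parameters, which is why approaches of this kind can be applied to models that are already deployed.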

Catrambone, V. et al. "Predicting object-mediated gestures from brain activity: an EEG study on gender differences." IEEE Transactions on Neural Systems and Rehabilitation Engineering 27.3 (2019): 411-418.